Tseng Hui-wen, Chen Wei-ting

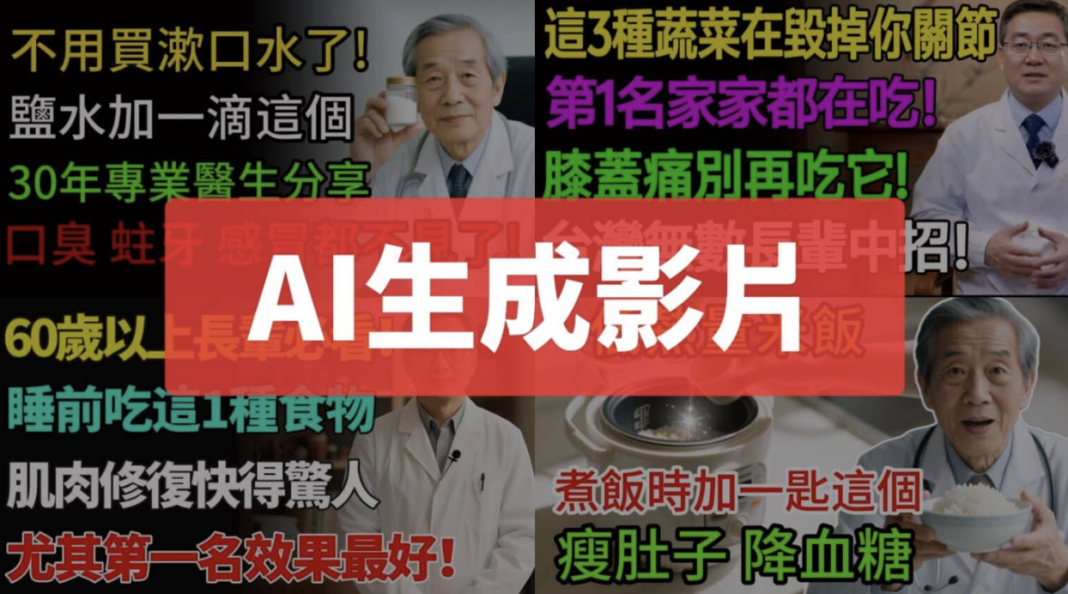

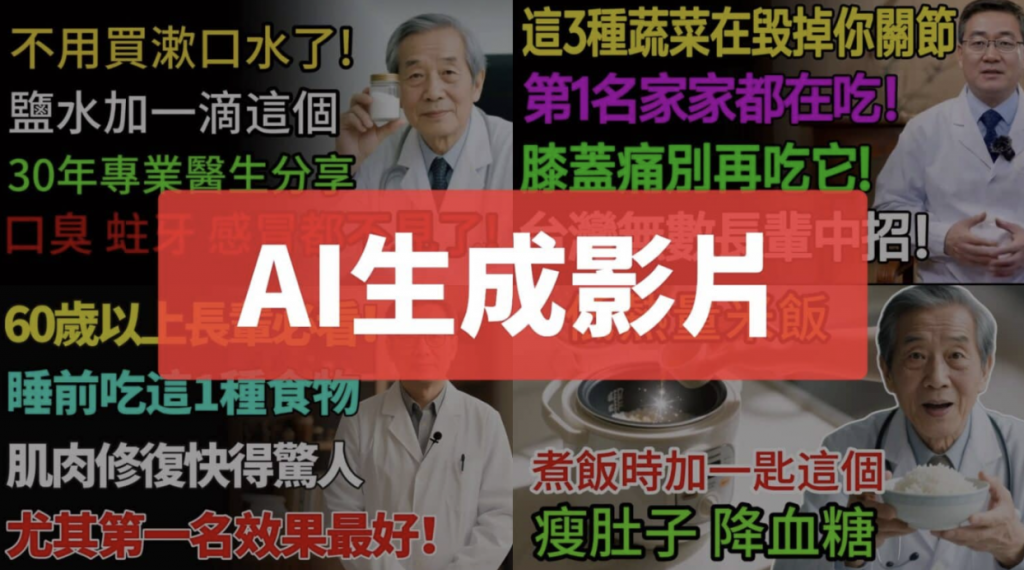

Have you noticed that when searching for health information on video platforms, you often find attention-grabbing, sensational videos that overturn conventional wisdom? When you click on them, they usually quote what appears to be a senior doctor, then cite several cases and “authoritative” medical studies, leaving viewers alarmed.

But if you watch a few more, you’ll notice that these videos tend to follow the same structure and use an almost formulaic pattern. It turns out they are AI-generated videos.

Although their content is often riddled with errors, it is packaged in a professional, serious tone, making it difficult for viewers to tell what is true and what is false. Experts warn that because AI video production is cheap and fast, it has grown into an industry. The information in these videos is often a mix of truth and falsehood, so the public should stay alert and avoid believing or forwarding it uncritically.

Three key traits of AI-generated health videos

The Taiwan FactCheck Center has observed that AI-generated health videos mushrooming online usually share three features: sensational, clickbait-style headlines; fictional characters and scenarios; and vague, nonspecific claims.

Take the video claiming that “microwaving leftover rice is harmful to health” as an example. Its title warned that refrigerated rice must not be reheated in a microwave and promised to teach viewers how to cook low-calorie rice that could lower blood sugar – a message highly effective at grabbing attention. A closer look at the video, however, shows that the speaker first claims to be an 85-year-old doctor with 55 years of practice, then two minutes later changes this to 35 years of practice, a clear contradiction that reasonably suggests the person is fictional.

The video also refers to numerous studies – such as “a 2022 study by Harvard T.H. Chan School of Public Health,” “a Johns Hopkins Medicine study,” “a study by Professor Yamada’s team at the University of Tokyo in Japan,” and “a University of Oxford study” – but these references are vague and lack precise citations. Fact-checkers searched with various keywords but could not locate any matching publications.

YouTube hosts many similar channels that upload large numbers of health videos in the same style, using sensational, exaggerated titles such as “Eating These 3 Vegetables Destroys Your Joints,” “Older Adults: Eat These 8 Foods Before Bed and Build Muscle While You Sleep,” “Salt Water Is the Strongest Mouthwash,” and “The Shocking Secret of Basil.” All are designed to capture attention, and the FactCheck Center’s verification work has found that they mix in a substantial amount of false information.

When AI health advice misleads behavior

National Yang Ming Chiao Tung University assistant professor Lin Yu-cheng, of the Institute of Oral Tissue Engineering and Biomaterials, notes that in medicine, correlation does not equal causation. When doctors or scholars discuss medical research, they are usually very cautious because medicine is continually advancing, and many studies remain inconclusive. If people encounter online videos that make absolute claims and clash with everyday experience – such as “microwaved rice is harmful to health” or “eating eggplant will destroy your joints” – there is a high chance that something is wrong, and such claims should not be believed or forwarded immediately.

Huang Shu-hui, a nutritionist at Taipei Post Hospital, has also observed the flood of AI health videos. She warns that these videos often “infinitely amplify the benefits of a single ingredient” and use “half-true, half-false claims” – a common tactic in health misinformation – and viewers should be vigilant when they encounter such content.

Huang Po-yao, assistant professor at National Taiwan University’s Institute of Health Behavior and Community Sciences, points out that this type of AI health video often oversimplifies complex relationships between health behaviors and risk, offering an overly reductive promise: “Just do A, and you can achieve B.”

Huang adds that when more and more people rely on these “quick fixes” instead of professional medical advice, the consequences may range from inappropriate diets or self-harming behaviors to increasing information inequality and irreversible harm to health. Some people may delay seeking medical care because they strongly believe the content of AI videos, missing the golden window for treatment. This poses challenges for both personal health and public health governance.

When AI video production becomes an industry

Experts from Taiwan’s National Institute of Cyber Security say many of these videos are likely made with software such as “Jianying,” which uses an end-to-end workflow covering content generation, visuals, editing, and voiceover. This makes it possible to mass-produce similar videos in a short period of time and distribute them across multiple platforms.

They note that with the combination of editing software and AI-driven mass production, the cost of producing AI videos has fallen to almost zero. Such content often looks accurate and professional but frequently mixes fictional and misleading information – so-called AI “hallucinations.” The difficulty of telling truth from falsehood is one of the key dangers of this technology.

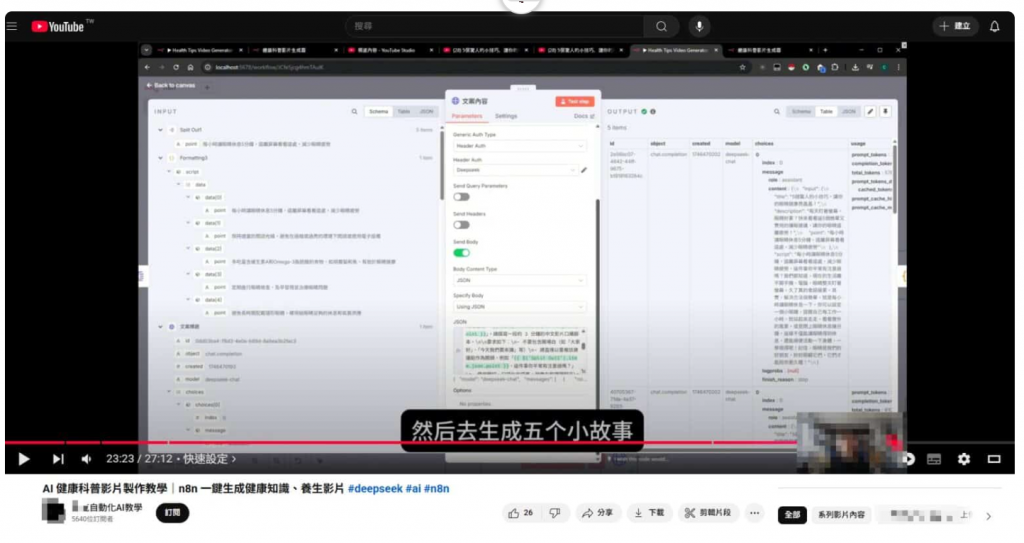

The FactCheck Center has also observed that “teaching and assisting users to create videos with AI” has already become an industry in its own right. Many paid AI creation tools provide a variety of video templates and formats, such as digital avatar voiceovers, text-to-animation functions, and automatic editing that turns images and copy into videos. Users may need to enter only a single sentence to generate a script, which can then be automatically transformed into a short AI video with a click.

Moreover, some people use DeepSeek to offer similar “health video workflow” services. Once users pay to become members and fill in a form specifying the topic – such as eyes, heart, or lungs – and the type of video, such as health advice or possible consequences, the system can automatically generate a title (for example, “5 Simple Ways to Protect Your Eyes and Stop Your Vision from Worsening”), along with an introduction and a full script. The text is then passed to an AI-voice-generation system for narration and combined with images and other matching materials, automatically synthesizing a complete health explainer video.

This workflow is not limited to health topics; by changing the prompt, it can easily be applied to finance, everyday tips, education, policy and regulations, pets, ghost stories, and more. Because AI video generation can now be done with no manual editing or scriptwriting, production barriers are extremely low. In recent years, such videos have flooded platforms like YouTube and then spread further onto personal Facebook pages and into groups.

How AI videos exploit the “emotional brain”

AI videos driven by fictional stories are designed to activate the “emotional brain.” National Yang Ming Chiao Tung University assistant professor Hsu Chih-chung recalls that as early as the 2021 “Xiaoyu deepfake face-swap incident,” tutorials on deepfake face-swapping were already circulating and gradually developing into an industry. He says AI-generated videos spreading across platforms are primarily designed to earn traffic, so the topic must be eye-catching and distinctive. Themes change in waves to maintain novelty, and sensational health videos are one example that easily captures public attention.

Hsu believes that in the case of health videos, the people who produce and share them may not always be acting out of malice; they may themselves lack the ability to distinguish truth from falsehood. Because AI content is generated based on human instructions, the system itself bears no responsibility for what it produces. As a result, these AI-generated health videos often contain a mix of plausible and false material and should not be trusted.

He also explains that people’s “emotional brain” usually reacts faster than their “rational brain,” so these videos often begin with fictional characters, settings, or storylines before moving on to their main argument. Once viewers’ emotions have been engaged, they are less likely to question whether the claims are true.

For example, such videos might open with: “I am Dr. Zheng Sheng‑ming, 85 years old, with 55 years of clinical practice”; “Mr. Lin from Taichung, 70 years old, is extremely careful about healthy eating… six months later, his knees became so swollen and painful that he needed a cane to walk”; or “After taking diabetes medication for 12 years, Ms. Lin Mei‑chiao, 67, saw her condition worsen, but after changing only the way she cooked rice for five months, she experienced a miracle…” These narratives are designed to draw viewers step by step into the fictional world created by AI.

Huang Po-yao also says that this kind of AI health video often gives people the illusion of being “human-like,” carrying “scientific authority,” and offering a “one-stop solution” to complex health issues in a very short time. It is similar to some smooth-talking pseudo-experts or influencers who seem trustworthy but in fact lack the relevant professional background.

This sense of credibility is part of what makes AI-generated health misinformation persuasive: it mimics expert language, uses structured explanations, and presents itself as comprehensive, even when the underlying claims are unreliable.

When the internet is flooded with AI content, literacy and education are the real remedies

Beyond the proliferation of AI‑generated health videos, many people now also turn to AI for “medical consultations,” describing their discomfort or concerns to an AI system in hopes of getting answers. Hsu Chih‑chung reminds users that even if AI seems correct most of the time, “being right most of the time does not mean it is always right.”

According to Huang Po‑yao, AI “consultations” can be divided into two levels. The first involves relatively common health behaviors, such as using AI tools to seek information on fitness, nutrition, or general symptoms; this carries a relatively low risk because, if the advice is wrong, the body often reacts quickly. The second level is much riskier and involves mental health and medical judgment. Research and reporting have shown that AI may sometimes respond inappropriately, even appearing to encourage self‑harm or suicide. In such cases, the use of technology in self‑care and intimate interaction is shifting from “I want to talk to a person” to “I think I am talking to a person.”

Huang explains that AI algorithms are not designed to “care” about humans; they are designed to produce predictable outputs. AI can rapidly generate large amounts of plausible advice, but it lacks the context and clinical reasoning required for sound medical judgment. When people rely too heavily on AI tools to interpret their symptoms, they may delay seeking professional help and miss the best time for treatment. This not only misleads individual health behaviors but may also weaken trust in professional healthcare systems and complicate the implementation of public health policies.

Huang also observes that in the earlier digital era, the spread of health information remained closely tied to interpersonal care, whereas in the AI era, such interactions increasingly resemble digital performances. Human‑AI interaction raises privacy and data‑collection concerns: people’s health information and private conversations may become data resources used for purposes other than care itself.

He stresses that the “human‑like feel” and “illusion of scientific authority” created by AI videos do not constitute genuine scientific judgment or care. As AI becomes more deeply embedded in everyday life, building robust AI literacy will become a key public health challenge.

Hsu Chih‑chung adds that AI‑generated content is already ubiquitous, so the public must first develop the awareness that much of what they encounter online may have been produced by AI, rather than accepting everything at face value. Looking ahead, an ever‑greater share of online content will be AI‑generated. As AI advances and AI content surges, human learning becomes even more important: only through education, ongoing learning, and constructive use of AI tools can people build the ability to judge what is true and what is false.

Reposted from the TFC website in collaboration with StopFake as part of the Ukraine–Taiwan Initiative for Election Information Resilience.