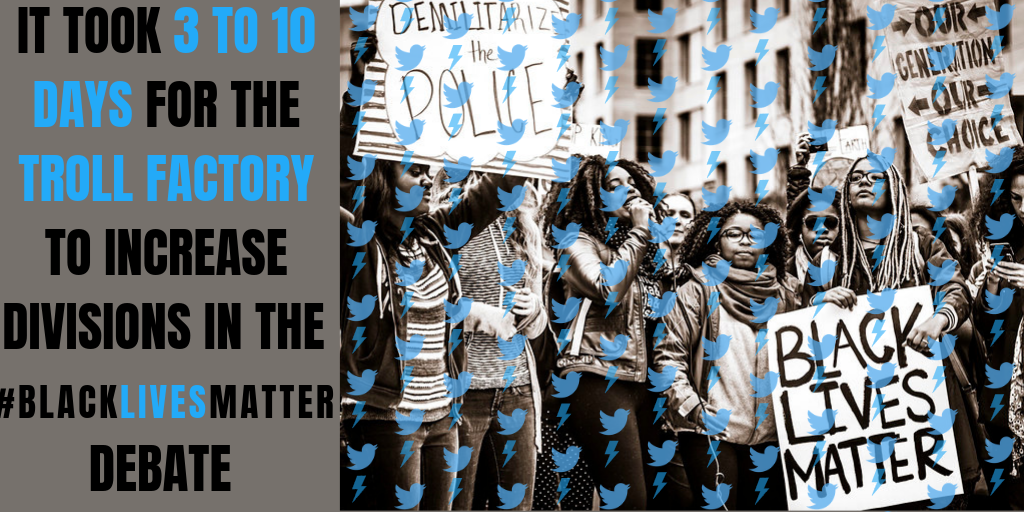

It took 3 to 10 days for the Russian Internet Research Agency to increase the polarisation of the #BlackLivesMatter debate on Twitter, research by scholars from the universities of Oxford and Amsterdam shows.

Using novel computational techniques and statistical methods, the researchers investigated the impact of the Russian IRA tweets on the entire #BlackLivesMatter conversation, showing that the activity of the Kremlin’s trolls had a measurable effect on a genuine online conversation.

The Internet Research Agency – more widely known by its moniker “the troll factory” – is infamous for conducting complex online influence operations to sway elections and manipulate public opinion in Russia and abroad. It attempted to manipulate US voters ahead of the 2016 US presidential election by exploiting existing fault lines and partisan issues, creating false online personas and websites, impersonating local news outlets, and cooperating with Russia’s military intelligence service to disseminate information hacked from several key Democratic groups.

The Black Lives Matter movement in the US spread online and offline to protest police violence against African-Americans, drawing numerous supporters as well as opposition. The Special Counsel investigation in the US revealed that the Russian IRA accounts imitated Twitter users on both sides of the debate and disseminated provocative messages to polarise the discussion, i.e. to stoke antagonism and divisions between opposing groups.

The research now shows a correlation between IRA activity and polarisation on Twitter. The effect did not occur immediately, but predominantly 3 to 10 days after the conversation had been manipulated. Polarisation peaked around 7 to 9 days after the troll activity, before returning to its baseline level. The correlation was most pronounced around periods of the highest Russian activity, suggesting that the more active the trolls, the greater the influence on the genuine conversation.
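A delayed relationship of this kind can be illustrated with a lagged correlation: troll activity on day t is compared with polarisation on day t + lag, for a range of lags. The sketch below is not the researchers' method, which the article does not describe in detail. It is a minimal illustration in Python, and the function names (`pearson`, `lagged_correlations`) and the data are invented for this example.

```python
from math import sqrt
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def lagged_correlations(activity, polarisation, max_lag):
    """Correlate activity on day t with polarisation on day t + lag, for each lag."""
    n = len(activity)
    return {
        lag: pearson(activity[: n - lag], polarisation[lag:])
        for lag in range(max_lag + 1)
    }


# Synthetic data, invented for illustration only: one burst of troll activity,
# echoed in the polarisation series exactly 7 days later.
activity = [0] * 30
activity[5], activity[6] = 10, 8
polarisation = [0] * 7 + activity[:23]

correlations = lagged_correlations(activity, polarisation, max_lag=14)
best_lag = max(correlations, key=correlations.get)
print(best_lag, round(correlations[best_lag], 3))
```

On real data the picture would be far noisier, and a correlation at a given lag would not by itself establish that the activity caused the change.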

IRA Twitter accounts tweeted both in support of (left) and in opposition to (right) the #BlackLivesMatter movement. Screenshots from: “Acting the Part: Examining Information Operations Within #BlackLivesMatter Discourse”, A. Arif, L. Stewart, K. Starbird.

By increasing the polarisation of the Twitter conversation, the IRA created favourable circumstances for disseminating disinformation. Sharp divisions among disputing groups increase acceptance of false and misleading information that confirms already existing beliefs, a psychological phenomenon known as “confirmation bias”. Furthermore, polarisation facilitates repeated exposure to the same inaccurate information, because alternative perspectives are effectively removed from the discussion.

Toxic conversations

The troll factory operations were not limited to Twitter. In 2018, Reddit identified 944 IRA accounts that produced over 16,800 posts on the social network.

Research into the troll factory’s activity on Reddit revealed that conversations (“threads”) initiated by IRA accounts were more toxic than conversations started by genuine users, and had more instances where people were attacked online because of their identity – e.g., their political views, race, or affiliation with a particular group.

“Toxicity” is not an accidental choice of word. Aiming to promote better discussions online, Google designed a tool which uses machine learning models to assess the effect a comment might have on an online conversation. The ruder, more aggressive, or more unreasonable the comment, the higher the “toxicity” score. The same tool can also measure the degree to which negative or hateful comments target someone because of their identity.

The scholars also found evidence suggesting a causal relationship between Russian IRA activity and the quality of the online conversation. Across all investigated communities (“subreddits”), troll interference decreased the recognition of multiple perspectives, making discussions more prejudiced and biased. While these findings require further research, they suggest that the measurable effects of Russian IRA activity undermine the quality of online conversations.

Based on: “Measuring the effect of Russian Internet Research Agency information operations in online conversations”, John D. Gallacher, Marc W. Heerdink, Defence Strategic Communications, NATO Strategic Communications CoE, Spring 2019.