by Sopo Gelava for DFRLab

More than one hundred Facebook assets promoted links to external websites sharing pro-Kremlin propaganda in Bulgaria.

Cover photo: Journalists record an Italian Air Force Eurofighter Typhoon fighter jet during the Dragon 24 military exercises in Poland on March 14, 2024. (Source: Dominika Zarzycka / SOPA Images via Reuters Connect)

The DFRLab identified a cluster of Facebook assets amplifying websites that target Bulgarian audiences with misleading and sensational content that often aligns with Kremlin propaganda. The cluster contains at least forty-four Facebook pages, thirty groups, and twenty-eight accounts. Its activity consists primarily of amplifying these external websites, which the Bulgarian Foundation of Humanities and Social Studies (HSSF) described as “mushroom websites” in 2023. The term refers to domains that appear, become inactive over time, and are then rapidly replaced by new ones.

According to HSSF, up to 400 anonymous websites with similar designs monetize Kremlin propaganda. The website network is centered around four primary domains – allbg.eu, bgvest.eu, dnes24.eu, and zbox7.eu. Each had hundreds of subdomains operating as independent websites and publishing identical content. In 2023, the network of mushroom websites published more than 350,000 news articles, which appeared to be generated through automated means. According to HSSF, “mushroom websites have been the most powerful media tool of online dissemination in Bulgaria, particularly of propaganda materials.” HSSF reported that the network used the Share4pay platform to advertise content. In 2023, the Center for the Study of Democracy (CSD) additionally reported a link between the network and AdRain.bg, a digital advertising platform in Bulgaria run by Bozhidar Kostov.

In 2023, the local fact-checking platform Factcheck.bg reported that the network of mushroom websites disseminated Kremlin disinformation that claimed children of Ukrainian refugees in European Union countries were taken from their parents. Additionally, in 2022, the Ukrainian fact-checking organization StopFake reported that Bulgarian websites, including those within this network, spread disinformation alleging that Ukraine had committed genocide against the people of Donbas.

The ‘mushroom’ websites

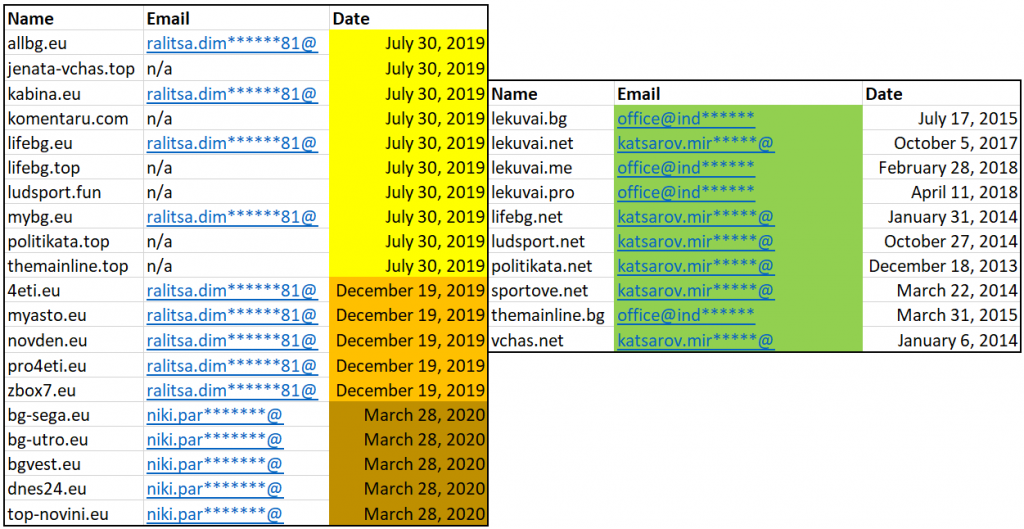

The DFRLab analyzed the creation dates and registration information of forty-six websites in the network that are connected by shared advertising trackers and registrant emails. Half of them (23) use the .eu domain extension. Most were created between 2018 and 2020, some in batches on a single day by the same registrant, which strongly suggests centralized coordination. Registration data from DomainTools Iris showed that emails linked to the AdRain advertising platform registered at least ten websites in the network between 2013 and 2018.
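The batch-registration signal described above can be checked mechanically once registration records are exported. The Python sketch below is a minimal illustration under stated assumptions, not the DFRLab's workflow or the DomainTools API: it assumes each record carries a domain, a creation date, and a registrant email (the `DomainRecord` type and `find_batch_registrations` function are hypothetical), and it groups domains created by the same email on the same day.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DomainRecord:
    # Hypothetical record shape, loosely modeled on a WHOIS export.
    domain: str
    created: date
    registrant_email: str


def find_batch_registrations(records, min_size=2):
    """Group domains registered by the same email on the same day.

    Returns a dict mapping (normalized_email, creation_date) to a sorted
    list of domains, keeping only groups with at least `min_size` domains.
    """
    groups = defaultdict(list)
    for rec in records:
        # Normalize case and whitespace so trivial variations still match.
        key = (rec.registrant_email.strip().lower(), rec.created)
        groups[key].append(rec.domain)
    return {key: sorted(doms) for key, doms in groups.items() if len(doms) >= min_size}


if __name__ == "__main__":
    # Placeholder data only; these are not domains from the network.
    sample = [
        DomainRecord("site-a.example", date(2019, 5, 2), "owner@example.com"),
        DomainRecord("site-b.example", date(2019, 5, 2), "Owner@example.com"),
        DomainRecord("site-c.example", date(2020, 1, 9), "other@example.com"),
    ]
    for (email, day), domains in find_batch_registrations(sample).items():
        print(email, day.isoformat(), domains)
```

Matching on normalized emails catches trivial variations such as capitalization but not deliberately different addresses, so a same-day, same-registrant group is a lead for manual review rather than proof of common ownership.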

The DFRLab analyzed the content of these websites and found that they targeted Bulgarian audiences with topics ranging from foreign and domestic political developments to sports, entertainment, lifestyle, and more. Not all of the content was inaccurate; political content, however, was often presented in a sensational and misleading way, with click-bait headlines that echoed the Kremlin’s agenda and perpetuated its propaganda.

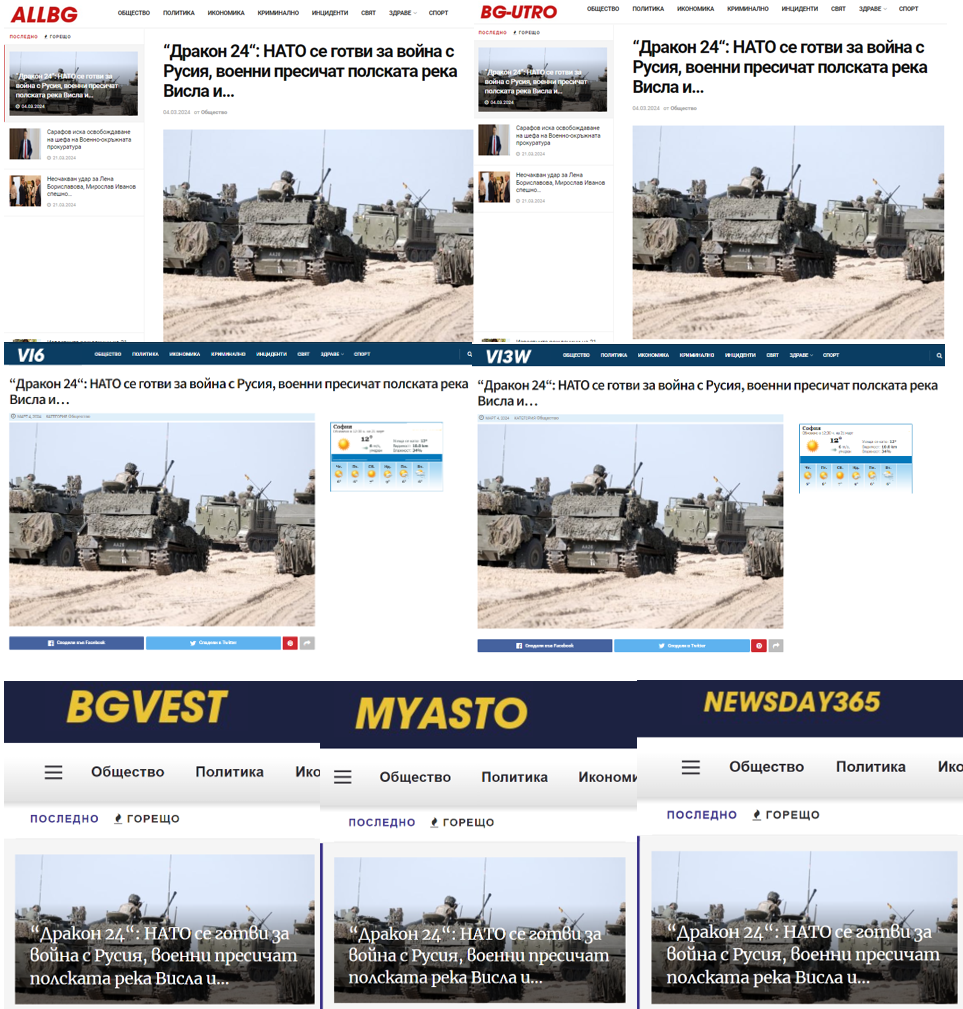

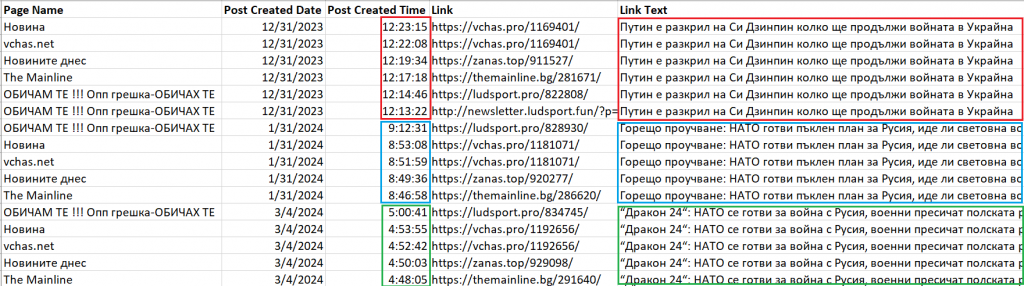

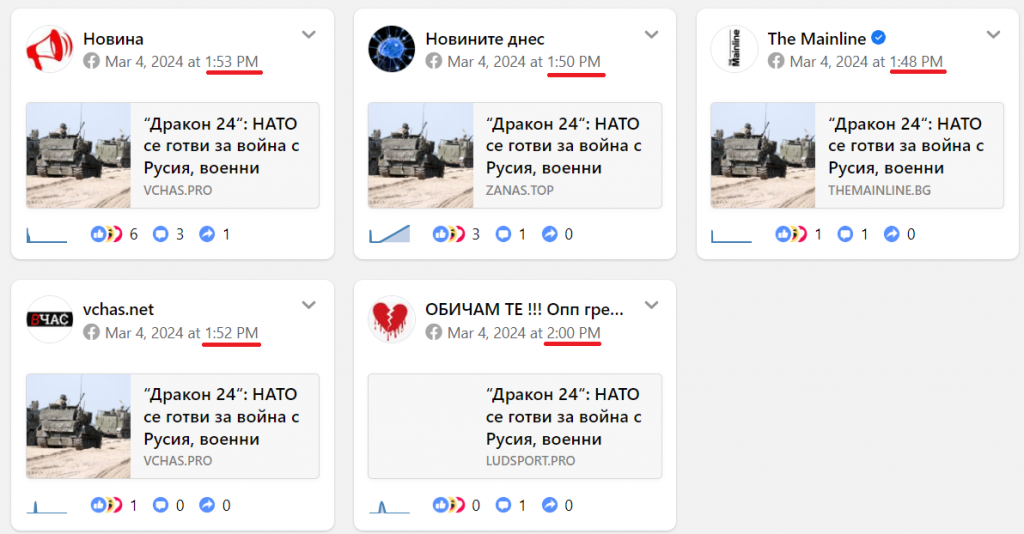

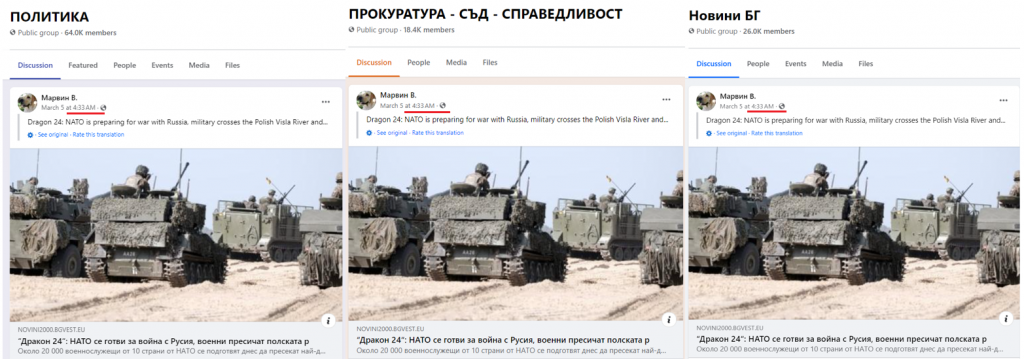

One of the most recent narratives we identified centered on the Dragon 24 military exercises held in Poland on March 4-5, 2024. Dragon 24 is an operational and tactical military exercise that is part of NATO’s Steadfast Defender 24 program. At least twenty-five Bulgarian websites published identical content about Dragon 24 under the misleading headline “Dragon 24: NATO prepares for war with Russia, soldiers cross the Polish Vistula River and….” The headline implies that the river-crossing drills were preparation for a war with Russia, and it trails off mid-sentence, a click-bait tactic meant to encourage users to read further.

Amplification on Facebook

By tracking how the Dragon 24 articles were amplified on Facebook, the DFRLab uncovered a cluster of 102 Facebook assets used to promote the mushroom websites: forty-four pages, thirty groups, and twenty-eight accounts. Because some of the pages and groups were dormant or inactive, the accounts also posted links to the mushroom websites in Facebook groups outside the cluster. In doing so, they capitalized on existing Bulgarian Facebook groups with thousands to tens of thousands of members. The accounts also shared links in Facebook groups supporting the Kremlin and pro-Kremlin political parties in Bulgaria.

Facebook pages

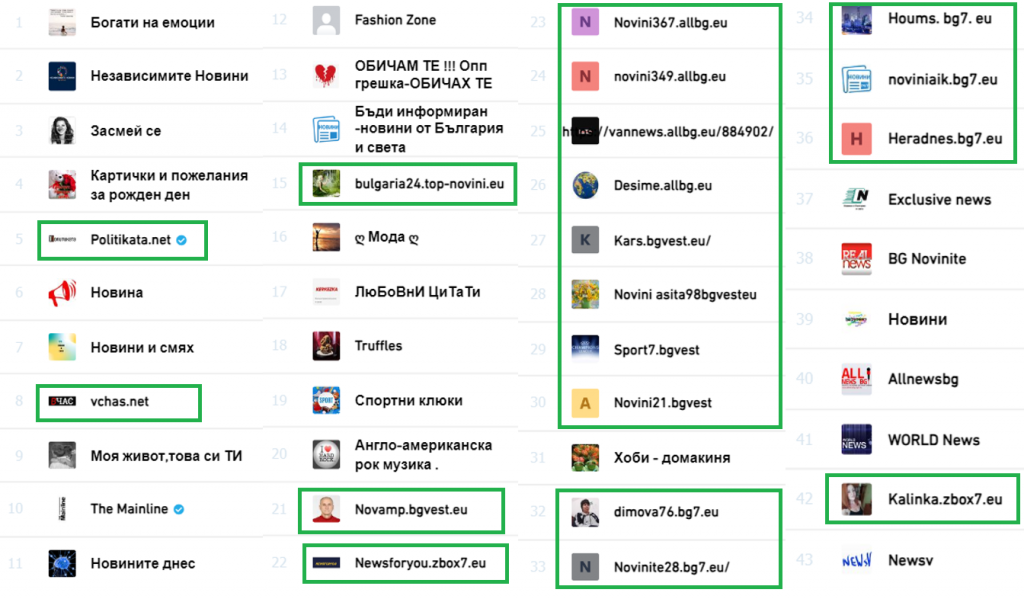

Nineteen pages within the cluster incorporated the domains of the associated websites into their names, but most of these pages showed almost no activity.

Twenty-one pages in this cluster registered on Facebook as “media/news companies,” “news & media websites,” or “magazines.” Other page categories varied but included “food & beverage,” “athletes,” and “just for fun.”

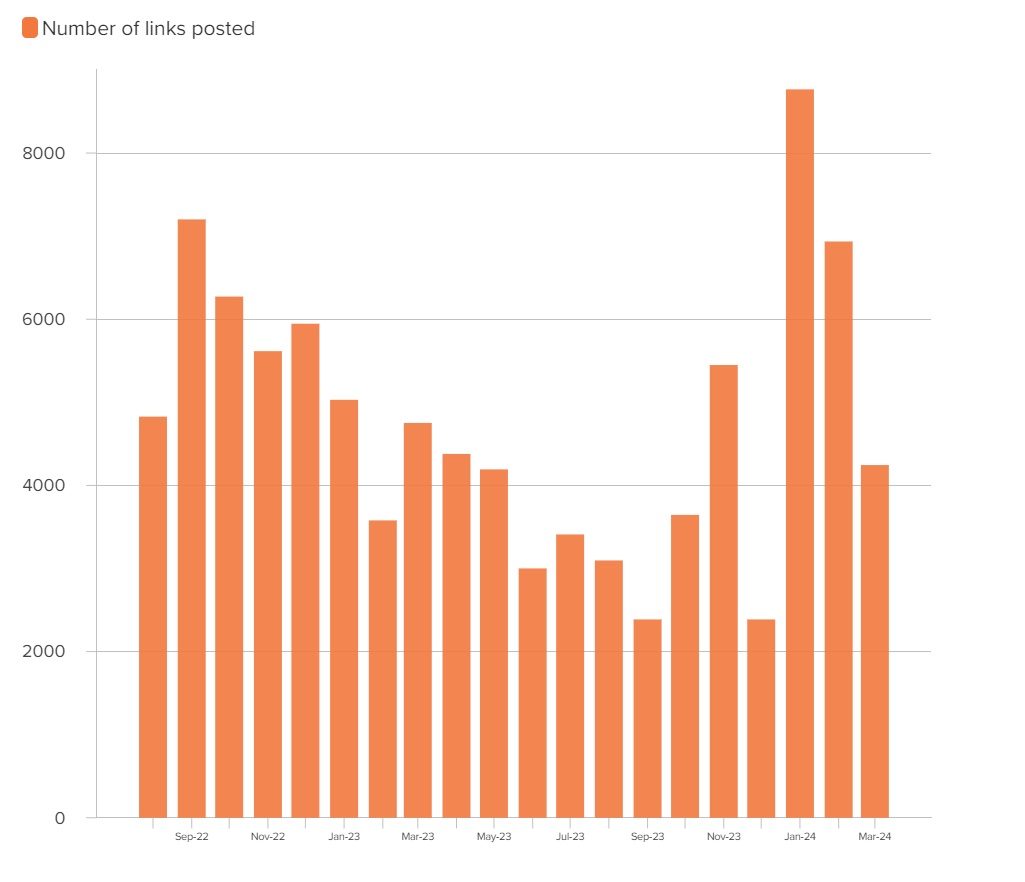

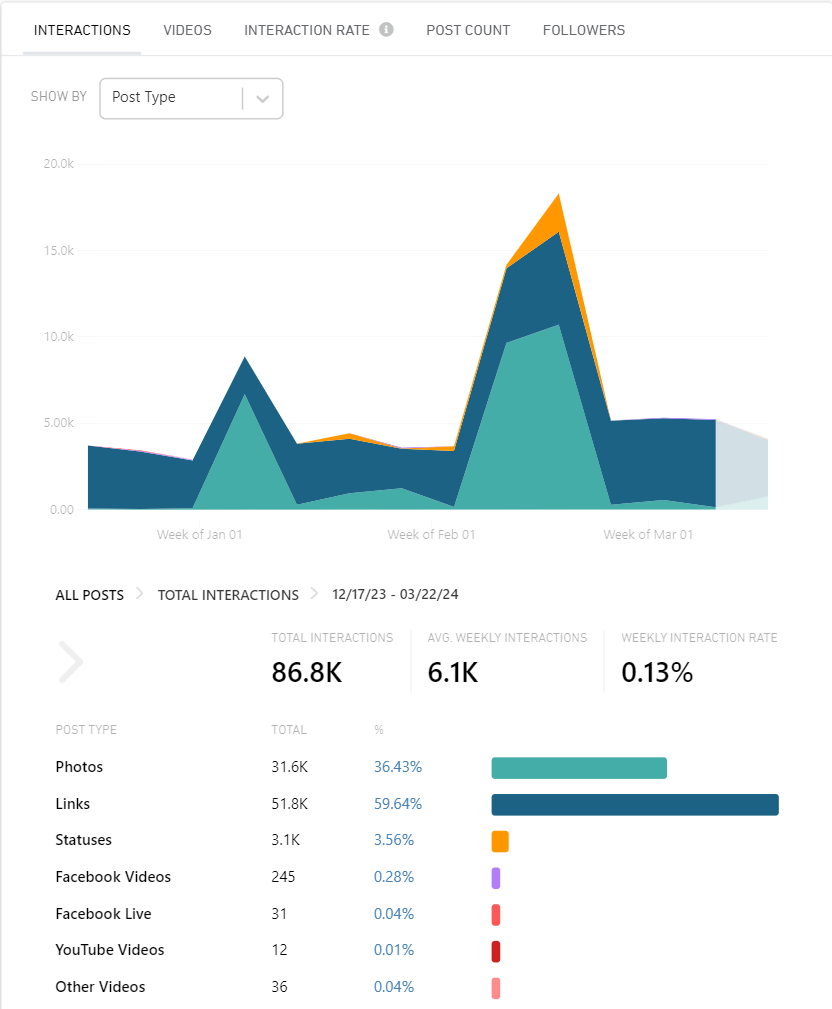

Using historical data from the social media monitoring tool CrowdTangle, the DFRLab analyzed the posting activity of the Facebook pages between August 1, 2022, and March 25, 2024, the period for which data was fully available. In total, the pages published 95,122 posts containing links to the mushroom websites. The most links were posted in January 2024 (8,767 posts), followed by October 2022 (7,202 posts). On average, the pages posted roughly 4,700 links per month.
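Monthly totals, peaks, and averages of this kind can be derived from post timestamps. The sketch below is a minimal illustration, not a recomputation of the figures above: it assumes a plain list of post dates (for example, taken from a CrowdTangle export; the export format and the `monthly_link_counts` helper are assumptions), tallies posts per calendar month, keeps months with no posts, and reports the peak month and the mean.

```python
from collections import Counter
from datetime import date


def monthly_link_counts(post_dates, start, end):
    """Tally posts per calendar month between `start` and `end` (inclusive).

    `post_dates` must be datetime.date values. Returns
    (series, peak_month, mean_per_month). Months without posts are kept
    with a count of 0, so the mean covers the whole window.
    """
    counts = Counter((d.year, d.month) for d in post_dates if start <= d <= end)

    # Enumerate every calendar month in the window, including empty ones.
    series = {}
    year, month = start.year, start.month
    while (year, month) <= (end.year, end.month):
        series[(year, month)] = counts.get((year, month), 0)
        year, month = (year + 1, 1) if month == 12 else (year, month + 1)

    peak = max(series, key=series.get)  # the earliest month wins on ties
    mean = sum(series.values()) / len(series)
    return series, peak, mean


if __name__ == "__main__":
    # Placeholder dates only, not data from the investigation.
    sample = [date(2022, 8, 3), date(2022, 10, 1), date(2022, 10, 15)]
    series, peak, mean = monthly_link_counts(sample, date(2022, 8, 1), date(2022, 10, 31))
    print(series, peak, mean)
```

Because the mean is taken over calendar months, a window that ends mid-month counts that partial month as a full one, which pulls the average down slightly.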

In some cases, the pages posted links to identical articles within a short span, typically one to ten minutes apart, another indicator that the assets may be centrally coordinated. Only five pages shared the misleading article about the NATO Dragon 24 exercises. Notably, one of them, “The Mainline,” carried Meta’s blue verification badge on Facebook.
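Near-simultaneous posting of the same link by different assets is a common signal in coordinated link-sharing analysis. The Python sketch below is a simplified illustration, not the DFRLab's method: it assumes posts are available as `(account, url, timestamp)` tuples and uses a hypothetical `coordinated_pairs` function whose ten-minute default window mirrors the upper end of the gaps described above.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def coordinated_pairs(posts, window=timedelta(minutes=10)):
    """Count URLs that pairs of distinct accounts shared within `window`.

    `posts` is an iterable of (account, url, timestamp) tuples. Returns a
    dict mapping (account_a, account_b), with account_a < account_b, to the
    number of distinct URLs both shared within `window` of each other.
    """
    by_url = defaultdict(list)
    for account, url, ts in posts:
        by_url[url].append((ts, account))

    pair_urls = defaultdict(set)
    for url, shares in by_url.items():
        shares.sort()  # chronological order per URL
        for i, (ts_i, acc_i) in enumerate(shares):
            # Scan forward only while later shares fall inside the window.
            for ts_j, acc_j in shares[i + 1:]:
                if ts_j - ts_i > window:
                    break
                if acc_i != acc_j:
                    pair = tuple(sorted((acc_i, acc_j)))
                    pair_urls[pair].add(url)
    return {pair: len(urls) for pair, urls in pair_urls.items()}


if __name__ == "__main__":
    # Placeholder accounts and URLs only.
    t0 = datetime(2024, 3, 5, 12, 0)
    sample = [
        ("page_a", "https://news.example/dragon-24", t0),
        ("page_b", "https://news.example/dragon-24", t0 + timedelta(minutes=3)),
        ("page_c", "https://news.example/dragon-24", t0 + timedelta(hours=2)),
    ]
    print(coordinated_pairs(sample))  # {('page_a', 'page_b'): 1}
```

Co-sharing within a window is an indicator rather than proof, since popular articles are often shared independently within minutes. Counting distinct URLs per pair lets an analyst focus on pairs that repeatedly post the same links together.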

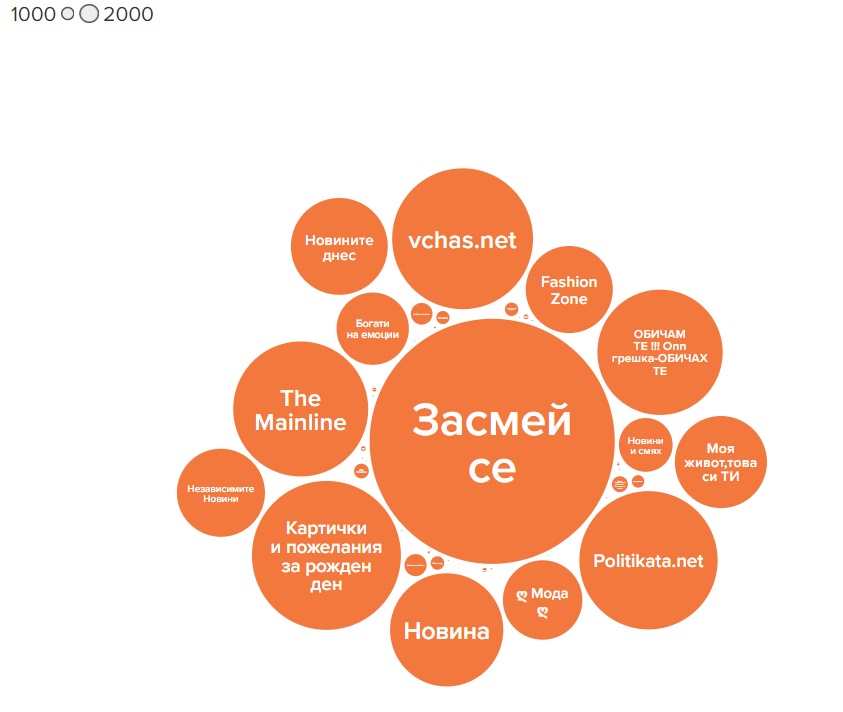

While most pages generated interactions through shared links, the two pages with the most followers, “Засмей се” (“Laugh”) with 330,000 followers and “Картички и пожелания за рожден ден” (“Birthday cards and wishes”) with 123,000, predominantly posted photos. Three other pages, “vchas.net,” “politataka.net,” and “The Mainline,” each had more than 100,000 followers. Nine pages had between 10,000 and 87,000 followers, while twenty pages in the network had fewer than 100.

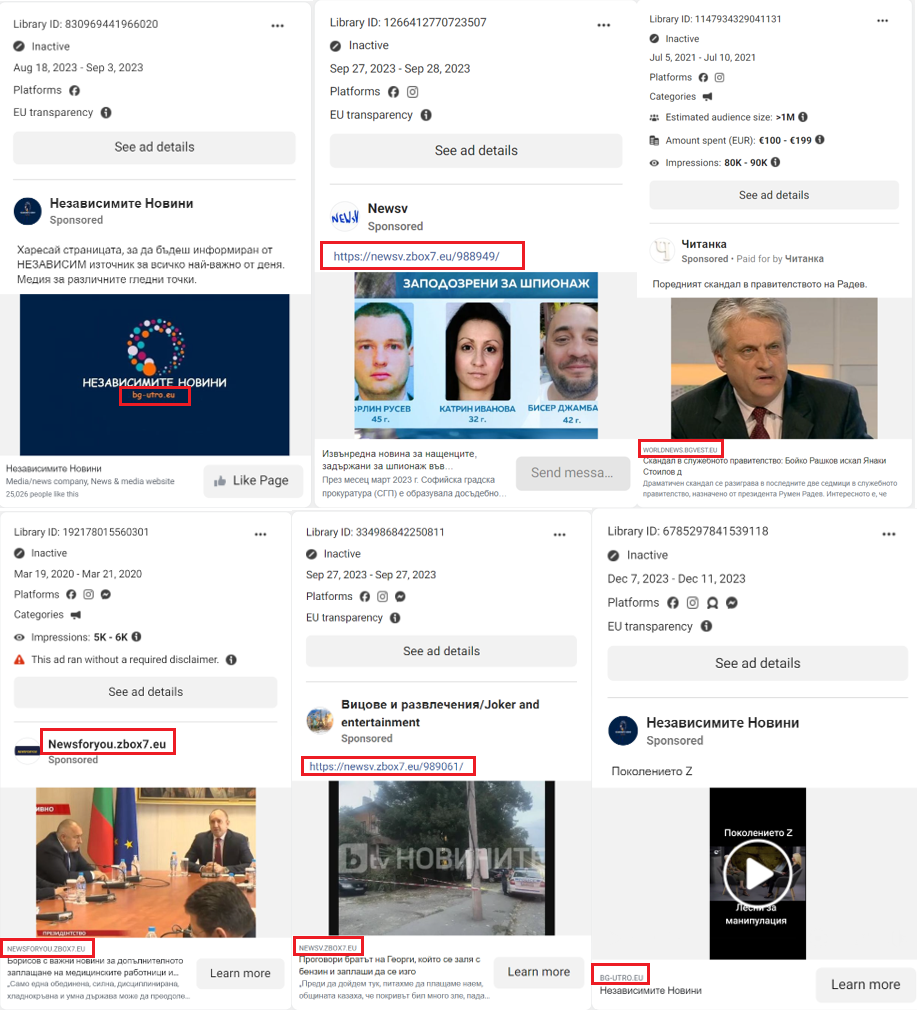

The DFRLab also found that between 2020 and 2023, at least three websites, “zbox7.eu,” “bgvest.eu,” and “bg-utro.eu,” were promoted to Bulgarian audiences through Facebook ads. Four ads ran without the disclaimer Meta requires for ads about social issues, elections, or politics. Two ads were subsequently removed, and the remaining ads listed only the page’s name in the beneficiary and payer fields.

Accounts

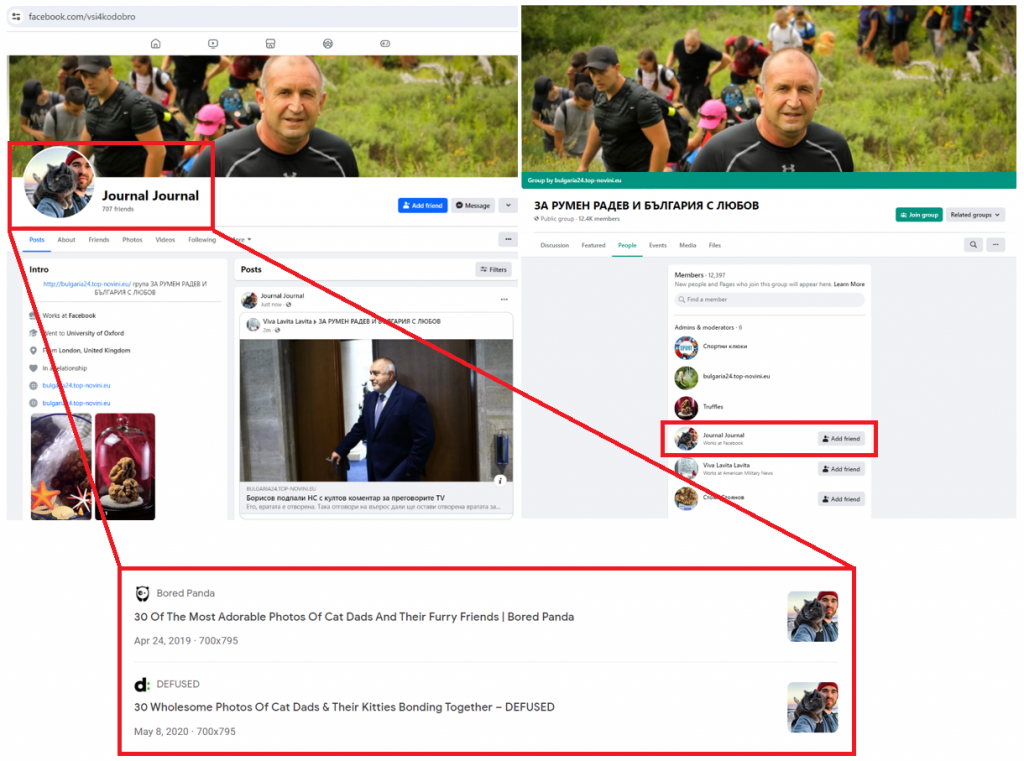

At least seventeen of the twenty-eight identified accounts displayed signs of possible inauthenticity, including discrepancies between profile names and URLs, generic photos sourced from elsewhere on the internet, and multiple accounts with similar names. Combined with the accounts’ coordinated promotion of the same websites and their management of groups within the network, these indicators suggest that the accounts could be inauthentic. Three accounts in the cluster used the same profile or cover photo as the groups they managed.

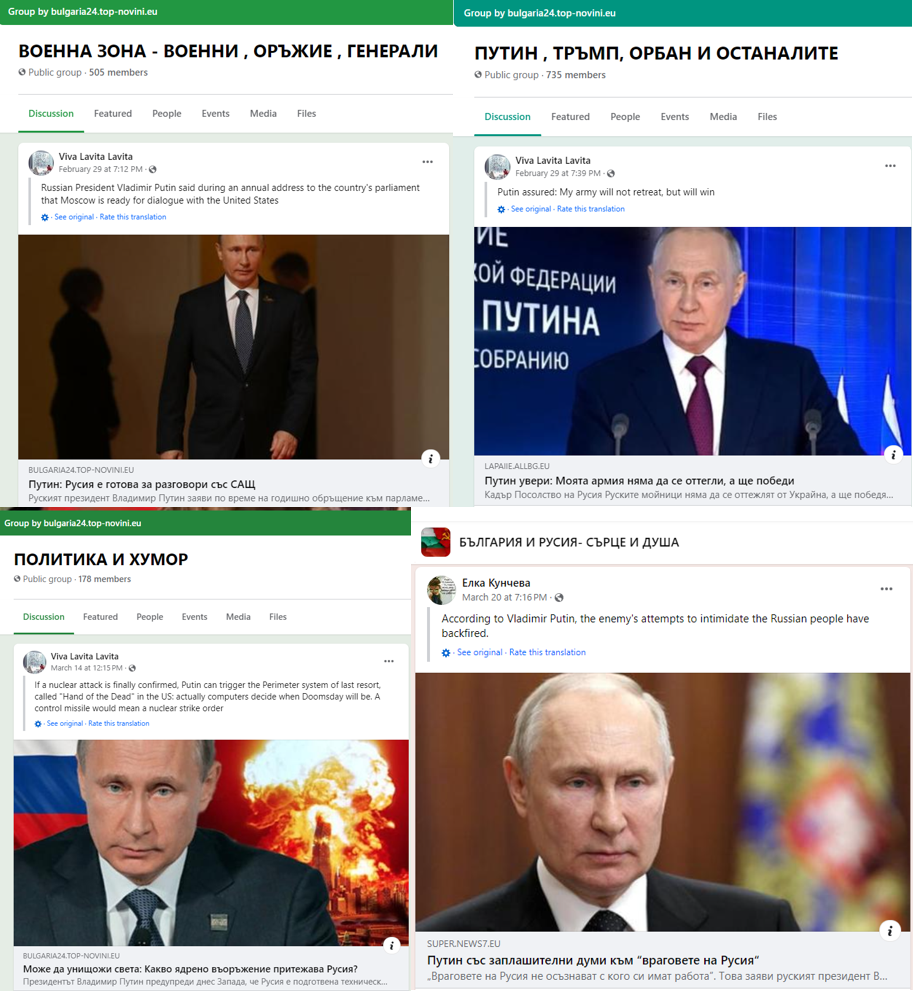

The DFRLab also identified three accounts with nearly identical names. These accounts managed openly pro-Kremlin groups within the network and promoted statements from Putin and other leaders supporting the Kremlin’s agenda. Additionally, one account’s cover photo mocked European Union values, while another’s expressed opposition to “participating in medical experiments,” likely a reference to COVID-19 vaccines.

Accounts played a significant role in promoting the mushroom websites on Facebook. While most pages and groups in the network had low engagement and few followers, the accounts pushed the links beyond the cluster by sharing them in popular Bulgarian groups. For example, a suspicious account named “Марвин В.” (“Marvin V.”) posted the misleading Dragon 24 article in at least three groups outside the cluster within seconds.

Facebook groups

A single page, “bulgaria24.top-novini.eu,” managed twenty-four of the thirty groups in the cluster. Using CrowdTangle data, the DFRLab found that the groups appeared to have been created primarily to promote links to the external websites. An analysis of posting behavior between December 22, 2023, and March 22, 2024, showed that up to 60 percent of group posts contained links to the external websites. Photos, which made up 36 percent of all posts, were shared predominantly in the two largest groups in the cluster, “Картички и пожелания за рожден ден” (“Birthday cards and wishes”) and “Бабини илачи” (“Grandmother’s cures”), which had 241,000 and 184,000 followers respectively. The next largest groups, “ЗА РУМЕН РАДЕВ И БЪЛГАРИЯ С ЛЮБОВ” (“FOR RUMEN RADEV AND BULGARIA WITH LOVE”) and “Вкусни рецепти, билки и полезни съвети” (“Delicious recipes, herbs and useful tips”), each had more than 10,000 followers. The remaining twenty-six groups had between 3,000 and 5,000 followers each.

Group names varied. Some groups were dedicated to promoting Bulgaria’s pro-Russian President Rumen Radev, Russian President Vladimir Putin, or closer ties between Russia and Bulgaria, while others focused on unrelated topics such as the mafia and Pablo Escobar, recipes, and sports. Such diversity is a common tactic for attracting audiences across a broad spectrum of interests. The accounts in the cluster managed these groups and shared links to the websites within them. Much of the linked content consisted of direct translations of statements made by Russian leaders.


Sopo Gelava, “Suspicious Facebook assets amplify pro-Kremlin Bulgarian ‘mushroom’ websites,” Digital Forensic Research Lab (DFRLab), March 26, 2024, https://dfrlab.org/2024/03/26/suspicious-facebook-assets-bulgarian-mushroom-websites.