Videos are circulating online in which individuals posing as Ukrainian soldiers claim that the authorities are deliberately prolonging the war because it benefits them. In reality, these videos were created using artificial intelligence and have no connection to real servicemen. Their purpose is to demoralize society and promote the narrative that the war is “profitable for the authorities” and that resistance is pointless.

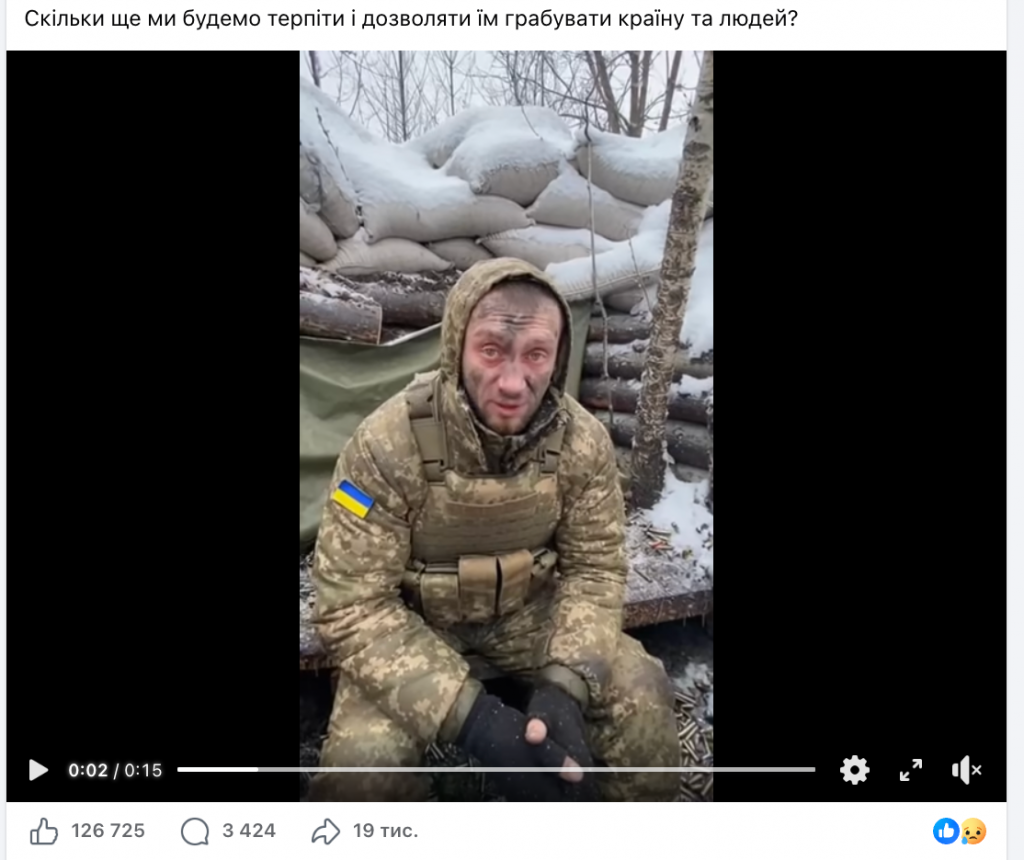

Short videos are actively spreading online in which alleged Ukrainian soldiers make harsh statements against the Ukrainian government. The speakers claim that the government has “looted the country” and is intentionally continuing the war because it is “profitable for Zelenskyy,” that “Ukrainians are being ground into fertilizer,” and that wounded soldiers are forced to pay for hospital treatment. Some videos also contain threats against Territorial Recruitment Center (TCC) employees and calls to “hide,” on the grounds that “there will be peace in six months and there is no point in dying for this government.”

All these publications share three key propaganda narratives: that the authorities are deliberately prolonging the war because it benefits them; that TCC employees are “abusing” people and “will be punished”; and that the state is corrupt, therefore “there is no point in fighting for Ukraine.”

In reality, this is a coordinated information operation based on artificial intelligence. The circulated videos are fabricated and have no connection to real Ukrainian servicemen.

Visual inspection alone reveals characteristic signs of synthetic origin in the videos. The faces of the characters appear blurred and unnatural, facial expressions are limited, and lip movements are not synchronized with speech. People in the background often sit motionless without natural reactions, which is unusual for real footage in a public setting.

Some videos show noticeable errors in uniform details: distorted or non-existent patches, incorrect equipment elements, and anomalies in the depiction of the Ukrainian state flag, such as incorrect color combinations or disproportionately large chevrons. Such artifacts are typical of generative models, which recreate images from training data but often produce significant visual inaccuracies.

For additional verification, StopFake conducted a technical analysis of the videos using the DeepFake-O-Meter tool developed by the UB Media Forensics Lab at the University at Buffalo. The service runs a set of independent algorithms that detect signs of AI generation and digital manipulation.

Each video was examined by several detectors. Most algorithms returned an extremely high probability of artificial origin, ranging from 90% to 100%. Such scores are characteristic of synthetic or substantially modified video content and are not consistent with authentic video recordings.

Particularly revealing was the result of the LIPINC detector, which identified anomalies in lip movement. In genuine video of a person speaking, such a lack of natural articulation is unusual and usually points to speech synthesis or an audio track overlaid without proper synchronization. Although some algorithms returned moderate scores, the combined results of the different models clearly indicate that artificial intelligence was used to create or manipulate these videos.
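To illustrate how scores from several detectors can be read together, here is a minimal Python sketch. It is not StopFake's procedure or part of DeepFake-O-Meter. The `summarize` function, the 0.50 "moderate" cut-off, the majority rule, and the detector names other than LIPINC are assumptions made for illustration, and the example scores are hypothetical placeholders rather than the results reported above.

```python
from statistics import median

# Assumed bands: the article describes 90% to 100% as "extremely high";
# the 0.50 cut-off for "moderate" is an illustrative assumption.
HIGH = 0.90
MODERATE = 0.50


def summarize(scores: dict[str, float]) -> dict:
    """Group per-detector probabilities (0.0 to 1.0) into bands.

    A video is flagged as likely synthetic when more than half of the
    detectors fall into the high band (an assumed majority rule).
    """
    if not scores:
        raise ValueError("no detector scores supplied")
    high = sorted(n for n, s in scores.items() if s >= HIGH)
    moderate = sorted(n for n, s in scores.items() if MODERATE <= s < HIGH)
    low = sorted(n for n, s in scores.items() if s < MODERATE)
    return {
        "median": median(scores.values()),
        "high": high,
        "moderate": moderate,
        "low": low,
        "likely_synthetic": len(high) > len(scores) / 2,
    }


# Hypothetical scores for one video; only the LIPINC name comes from the text.
example = {
    "LIPINC": 0.99,
    "detector_a": 0.97,
    "detector_b": 0.93,
    "detector_c": 0.62,
}
summary = summarize(example)
print(summary)
```

Reading the bands side by side keeps a single moderate score from either masking or overstating what the majority of detectors report.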

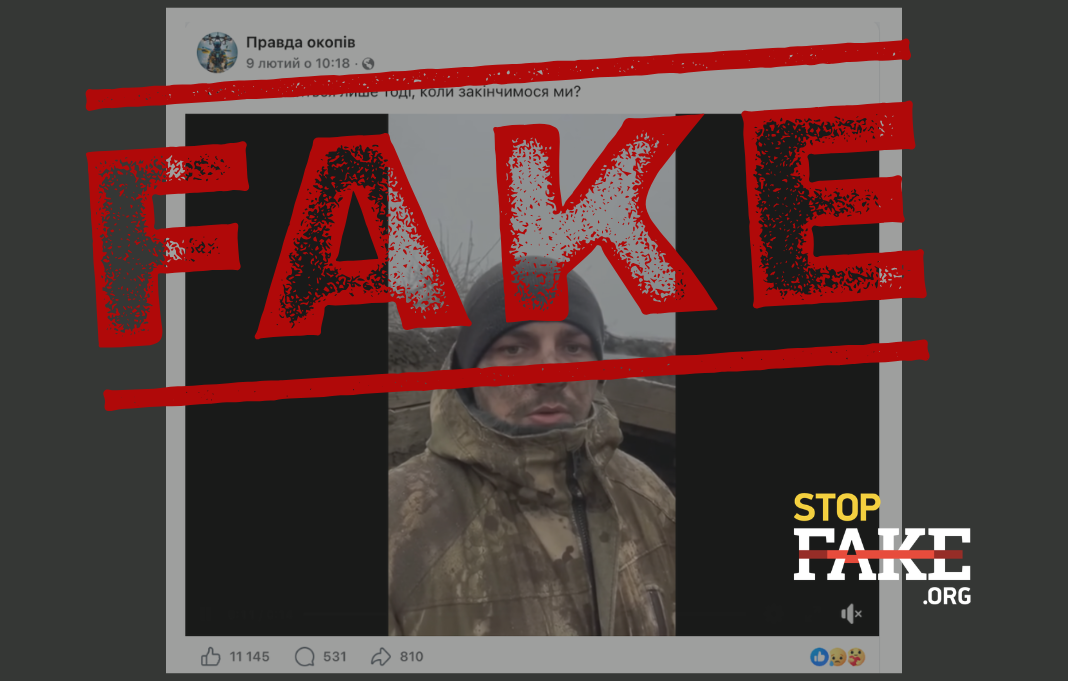

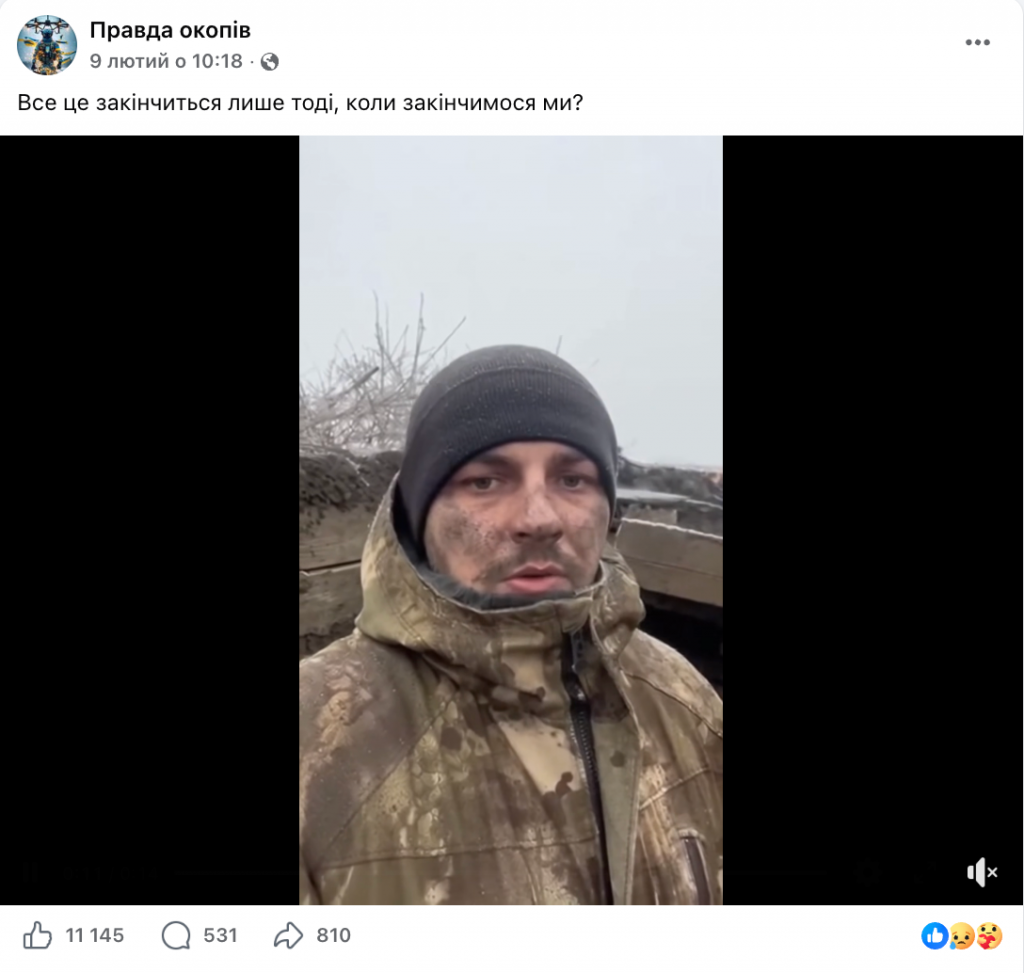

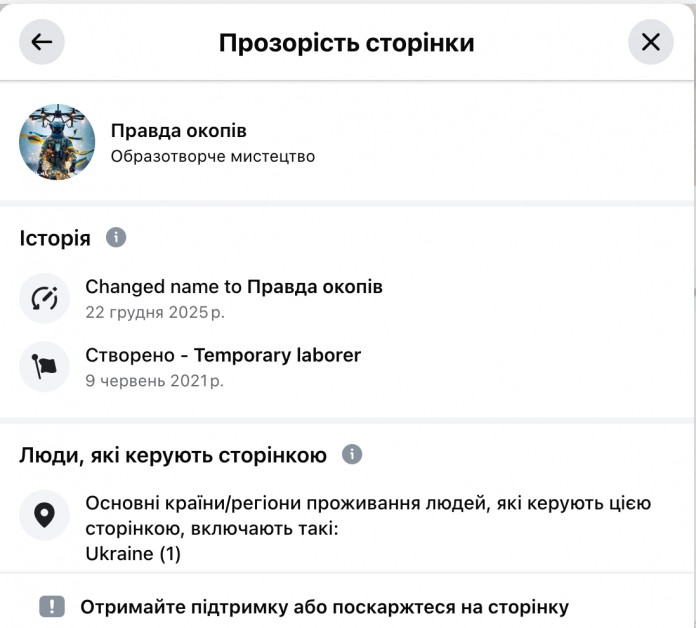

All the mentioned videos were published on the Facebook page “Pravda okopiv” (“Truth of the Trenches”). According to the page’s transparency data, it has existed since 2021, and in December 2025 it changed its name from “Temporary laborer” to “Pravda okopiv.” The page administrator is located in Ukraine.

Almost all the videos follow the same format: about 15 seconds long, featuring a “Ukrainian soldier” delivering emotional accusations against the authorities. The posts gather thousands to tens of thousands of reactions and comments. However, analysis of the comments shows a significant number of bot-like accounts that support the content of the videos and amplify radical calls for retaliation, an immediate “peace at any cost,” and laying down arms. This creates the illusion of mass support for such sentiments and distorts the picture of actual public opinion.

StopFake has previously documented similar information campaigns in the articles “Video Fake: Ukrainians Complain About Zelenskyy’s Illegitimacy, Call for Ending the War at Any Cost and Fleeing the Country” and “Video Fake: Soldiers Confront the Government in the Verkhovna Rada.” In both cases, the videos were artificial, created using neural networks.

The spread of such videos is aimed at undermining trust in the Ukrainian authorities and demoralizing society. The image of a “soldier from the trenches” makes the posts look like an internal protest, a supposed “voice from the front.” This is intended to enhance the persuasive effect: if “even soldiers” are criticizing something, the claims appear more convincing.

At the same time, the videos promote a narrative of the futility of resistance, the inevitability of an imminent “peace,” and the need to evade mobilization. Such messages directly aim to reduce mobilization potential and increase internal tension.

Thus, the circulated videos are not evidence of real statements by Ukrainian servicemen but an example of the use of artificial intelligence technologies in information warfare. Their purpose is to create the illusion of internal division and dissatisfaction, sow distrust toward state institutions, and weaken the resilience of Ukrainian society.